June 15, 2023

Keywords: Assessments, Constructive Alignment, Learning Analytics, Learning Outcomes

Target readers: educators, researchers, educational leaders, learning designers

Authors: Abhinava Barthakur & Vitomir Kovanovic

Abhinava is a Postdoctoral Research Fellow at the Centre for Change and Complexity in Learning (C3L), University of South Australia. His research centers on exploring the dynamic relationship between AI-powered technologies and educational assessments. Specifically, Abhinava investigates how AI can be utilized to enhance and transform traditional assessment practices.

Vitomir is a Senior Lecturer at Education Futures, University of South Australia. His research interests include examining students’ learning strategies and self-regulated learning and assessing complex skills such as critical thinking and collaboration within different learning environments and educational settings.

ALIGNING ASSESSMENT WITH COURSE LEARNING OUTCOMES THROUGH LEARNING ANALYTICS

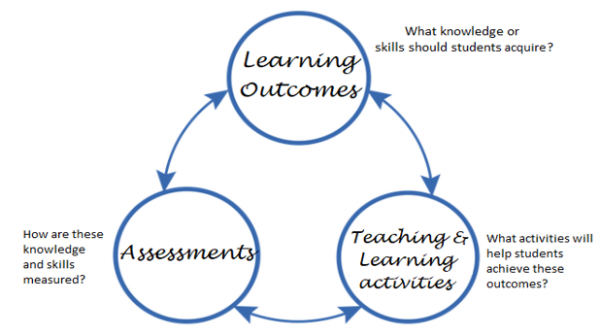

Constructive alignment in online courses

When it comes to effective learning, one factor of paramount importance is a well-crafted learning experience that aligns with the desired learning outcomes of a course or degree program. This need is well captured by Biggs (1996) and his notion of constructive alignment. Inspired by constructivist theory, the idea promotes an active and dynamic approach to learning in which students construct knowledge through engagement with carefully designed learning activities. The essence of constructive alignment lies in its link to curriculum design: learning activities and assessments should be aligned with predefined learning outcomes.

Figure 1: Constructive alignment (adapted from Biggs, 1996)

In online education, constructive alignment is even more critical. Online courses rely on asynchronous and decentralized modes of delivery, so learners assume greater autonomy and responsibility for their learning, and decision-making rests firmly with them. The role of the instructor shifts to that of a facilitator who guides and empowers students on their learning journey. In such settings, the absence of face-to-face interaction and direct instruction heightens the importance of course design with clear learning outcomes and expectations. A lack of such clarity can impede learning progress and lower the quality of an online course. Therefore, aligning learning outcomes with course assessments and activities becomes a pivotal factor in the success and effectiveness of online education.

How to ensure alignment?

There are several introspective instruments for evaluating constructive alignment between learning outcomes and learning tasks. Most existing tools focus on gathering the opinions of course designers and content experts. With such tools, constructive alignment and other design elements are reviewed against a fixed set of rubric criteria. For example, one popular instrument is the “Course Design Rubric Standards” by Quality Matters, which includes eight different standards and 40 specific benchmarks for assessing the quality of course design, including the alignment between learning outcomes and course activities and assessments. The Blackboard Exemplary Course Program Rubric can similarly be used to identify best practices in online course design, assessment, interaction, collaboration, and learner support. Finally, Balanced Design Planning supports the design of new courses based on the opinions of instructors and course designers.

While these instruments have been widely accepted, they rely on human expert judgment and are therefore subject to the associated issues of subjectivity. Additionally, such approaches are time-consuming and complex, which raises concerns about scalability. Hence, there is an urgent need for new approaches to examining constructive alignment that are more scalable and driven by evidence. In this regard, educational technologies and digital tools collect a wealth of data that can offer richer insights into course design and its association with student success.

New methodological advances

Since data about degree programs and courses is readily available in digital format, there is great potential in using learning analytics techniques to evaluate and improve the alignment between course outcomes and assessments. In our recent study, we investigated one part of constructive alignment, namely the alignment of course learning outcomes and assessments within a four-week professional development MOOC. Using students’ responses to open-ended questions, we showed how multidimensional item response theory (MIRT) models can be used to validate the alignment between course outcomes and assessments and to identify ways to improve it.

The developed model requires only two inputs: ordinally graded assessment data (such as correct, partially correct, and incorrect) and the number of target learning outcomes (K). Both are typically readily available from historical course offerings. Generally, the number of assessments is driven by the number of learning outcomes, with at least 2K-1 assessments needed to measure K learning outcomes (Close et al., 2012). Depending on the learning context, the same assessments can also be fitted with models that include fewer than K learning outcomes to check for better mappings between learning outcomes and assessments. Relative fit criteria (e.g., Akaike Information Criterion, Bayesian Information Criterion) and absolute fit criteria (e.g., comparative fit index, Tucker-Lewis index, root mean square error of approximation) are then used to assess the quality of the alignment and potentially identify a better alignment model.
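The arithmetic behind the 2K-1 rule and the relative fit criteria can be sketched in a few lines of Python. This is a minimal illustration, not the study's implementation: the function names are hypothetical, the log-likelihoods and parameter counts are made-up placeholders, and in practice these quantities would come from a fitted MIRT model. The AIC and BIC formulas are the standard definitions, where lower values indicate a better trade-off between fit and complexity.

```python
import math


def min_assessments(k: int) -> int:
    """Minimum number of assessments for k learning outcomes (the 2K-1 rule)."""
    if k < 1:
        raise ValueError("k must be at least 1")
    return 2 * k - 1


def aic(log_likelihood: float, n_params: int) -> float:
    """Akaike Information Criterion: 2p - 2 ln L."""
    return 2 * n_params - 2 * log_likelihood


def bic(log_likelihood: float, n_params: int, n_obs: int) -> float:
    """Bayesian Information Criterion: p ln(n) - 2 ln L."""
    return n_params * math.log(n_obs) - 2 * log_likelihood


def rank_models(fits: dict, n_obs: int) -> list:
    """Sort candidate models by BIC, best (lowest) first.

    `fits` maps a model name to a (log_likelihood, n_params) tuple.
    """
    return sorted(fits, key=lambda name: bic(fits[name][0], fits[name][1], n_obs))


# HYPOTHETICAL placeholder values for illustration only; not results from the study.
example_fits = {
    "candidate_a": (-5200.0, 120),
    "candidate_b": (-5210.0, 90),
    "candidate_c": (-5215.0, 80),
}
ranking = rank_models(example_fits, n_obs=500)
print(min_assessments(6))  # assessments needed for six outcomes under the rule
print(ranking)
```

Relative criteria such as these only compare candidate models against one another; the absolute indices mentioned above are still needed to judge whether any candidate fits well on its own.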

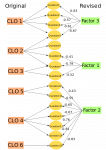

In our study, we examined how well fifteen assessment questions fit the original model with six learning outcomes and also examined alternative models with between two and six learning outcomes. Our findings revealed that the original alignment was not optimal, and we identified two alternative alignments that better fit the data (Figure 2). While some assessments showed consistent alignment across all models, a few learning outcomes were “merged” in the revised models. To confirm our concerns about the original alignment structure, we discussed the results with content experts, who validated our findings. Keeping human experts in the loop for such validation is critical, as the most useful alignment does not always match the statistically best-fitting model.

a) Three learning outcomes model – b) Four learning outcomes model

Figure 2: The evaluation of the alignment between learning assessments and learning outcomes. The left side of Figure 2a) shows the initial alignment of six learning outcomes to course assessments, while the right side shows the revised model with three learning outcomes. Similarly, Figure 2b) shows the alternative model with four learning outcomes, which fits the data equally well.
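One way to picture learning outcomes being “merged” is as a relabeling of the assessment-to-outcome mapping. The sketch below is an illustrative assumption rather than the procedure used in the study: the helper `merge_outcomes`, the question labels, and the outcome labels are all hypothetical, and the toy mapping does not reproduce the course's actual alignment.

```python
from typing import Dict, List


def merge_outcomes(
    alignment: Dict[str, List[str]], merged: Dict[str, str]
) -> Dict[str, List[str]]:
    """Relabel outcomes according to `merged` (old label -> new label).

    Duplicates created by the merge are dropped while preserving order.
    """
    result: Dict[str, List[str]] = {}
    for assessment, outcomes in alignment.items():
        relabeled: List[str] = []
        for outcome in outcomes:
            new_label = merged.get(outcome, outcome)
            if new_label not in relabeled:
                relabeled.append(new_label)
        result[assessment] = relabeled
    return result


def count_outcomes(alignment: Dict[str, List[str]]) -> int:
    """Number of distinct learning outcomes referenced by the alignment."""
    return len({o for outcomes in alignment.values() for o in outcomes})


# HYPOTHETICAL toy alignment: three questions mapped to three outcomes.
original = {"Q1": ["LO1"], "Q2": ["LO2", "LO3"], "Q3": ["LO3"]}

# Merge LO3 into LO2, leaving two distinct outcomes.
revised = merge_outcomes(original, {"LO3": "LO2"})
print(revised)
print(count_outcomes(original), count_outcomes(revised))
```

A mapping produced this way is only a candidate. As noted above, it would still need to be fitted to the assessment data and reviewed by content experts before being adopted.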

Summary

There is considerable potential in using learning analytics to make data-informed course design decisions. Our research demonstrated an evidence-based approach for evaluating constructive alignment between learning outcomes and course assessments. The presented methodology uses historical assessment data, can be applied to various educational contexts, and can be extended to other assessment forms. Such an approach can also be expanded to evaluate the alignment between learning outcomes and different kinds of learning activities, even for new courses with no initial alignment specification. In this regard, our approach shows the benefit of using data to make informed decisions about course design in a way that maximizes student learning outcomes and provides evidence for accurate assessment of student learning.

References

Biggs, J. (1996). Enhancing teaching through constructive alignment. Higher Education, 32(3), 347–364. https://doi.org/10.1007/BF00138871

Close, C. N., Davison, M. L., & Davenport, E. (2012). An exploratory technique for finding the Q-matrix in cognitive diagnostic assessment: Combining theory with data. Annual Meeting of the National Council on Measurement in Education, Vancouver, British Columbia, Canada.